As Israel uses US-made AI models in war, concerns arise about tech’s role in who lives and who dies

The rise of AI

As U.S. tech titans ascend to prominent roles under President Donald Trump, the AP’s findings raise questions about Silicon Valley’s role in the future of automated warfare. Microsoft expects its partnership with the Israeli military to grow, and what happens with Israel may help determine the use of these emerging technologies around the world.

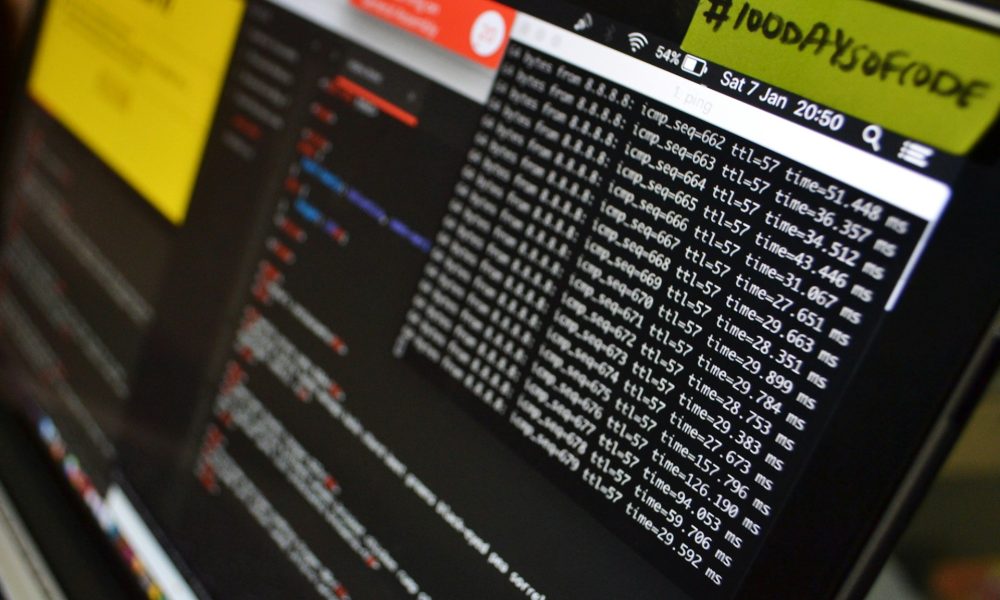

The Israeli military’s usage of Microsoft and OpenAI artificial intelligence spiked last March to nearly 200 times its level in the week leading up to the Oct. 7 attack, the AP found in reviewing internal company information. The amount of data it stored on Microsoft servers doubled between that time and July 2024 to more than 13.6 petabytes, roughly 350 times the digital memory needed to store every book in the Library of Congress. Usage of Microsoft’s huge banks of computer servers by the military also rose by almost two-thirds in the first two months of the war alone.

After the Oct. 7 attack, in which about 1,200 people were killed and more than 250 were taken hostage, Israel set out to eradicate Hamas, and its military has called AI a “game changer” in yielding targets more swiftly. Since the war started, more than 50,000 people have died in Gaza and Lebanon and nearly 70% of the buildings in Gaza have been devastated, according to health ministries in Gaza and Lebanon.

The AP’s investigation drew on interviews with six current and former members of the Israeli army, including three reserve intelligence officers. Most spoke on condition of anonymity because they were not authorized to discuss sensitive military operations.

The AP also interviewed 14 current and former employees inside Microsoft, OpenAI, Google and Amazon, most of whom also spoke anonymously for fear of retribution. Journalists reviewed internal company data and documents, including one detailing the terms of a $133 million contract between Microsoft and Israel’s Ministry of Defense.

The Israeli military says its analysts use AI-enabled systems to help identify targets but independently examine them together with high-ranking officers to ensure they comply with international law, weighing the military advantage against the collateral damage. A senior Israeli intelligence official authorized to speak to the AP said lawful military targets may include combatants fighting against Israel, wherever they are, and buildings used by militants. Officials insist that even when AI plays a role, there are always several layers of humans in the loop.

“These AI tools make the intelligence process more accurate and more effective,” said an Israeli military statement to the AP. “They make more targets faster, but not at the expense of accuracy, and many times in this war they’ve been able to minimize civilian casualties.”

The Israeli military declined to answer detailed written questions from the AP about its use of commercial AI products from American tech companies.

Microsoft declined to comment for this story and did not respond to a detailed list of written questions about cloud and AI services provided to the Israeli military. In a statement on its website, the company says it is committed “to champion the positive role of technology across the globe.” In its 40-page Responsible AI Transparency Report for 2024, Microsoft pledges to manage the risks of AI throughout development “to reduce the risk of harm,” and does not mention its lucrative military contracts.

Advanced AI models from OpenAI, the maker of ChatGPT, are provided through Microsoft’s Azure cloud platform, where the Israeli military purchases them, the documents and data show. Microsoft has been OpenAI’s largest investor. OpenAI said it does not have a partnership with Israel’s military, and its usage policies say its customers should not use its products to develop weapons, destroy property or harm people. About a year ago, however, OpenAI changed its terms of use from barring military use to allowing for “national security use cases that align with our mission.”

The human toll of AI

It’s extremely hard to identify when AI systems enable errors because they are used with so many other forms of intelligence, including human intelligence, sources said. But together they can lead to wrongful deaths.

In November 2023, Hoda Hijazi was fleeing with her three young daughters and her mother from clashes between Israel and Hamas ally Hezbollah on the Lebanese border when their car was bombed.

Before they left, the adults told the girls to play in front of the house so that Israeli drones would know they were traveling with children. The women and girls drove alongside Hijazi’s uncle, Samir Ayoub, a journalist with a leftist radio station, who was caravanning in his own car. They heard the frenetic buzz of a drone very low overhead.

Soon, an airstrike hit the car Hijazi was driving. It careened down a slope and burst into flames. Ayoub managed to pull Hijazi out, but her mother — Ayoub’s sister — and the three girls — Rimas, 14, Taline, 12, and Liane, 10 — were dead.

Before they left their home, Hijazi recalled, one of the girls had insisted on taking pictures of the cats in the garden “because maybe we won’t see them again.”

In the end, she said, “the cats survived and the girls are gone.”

Video footage from a security camera at a convenience store shortly before the strike showed the Hijazi family in a Hyundai SUV, with the mother and one of the girls loading jugs of water. The family says the video proves Israeli drones should have seen the women and children.

The day after the family was hit, the Israeli military released video of the strike along with a package of similar videos and photos. A statement released with the images said Israeli fighter jets had “struck just over 450 Hamas targets." The AP’s visual analysis matched the road and other geographical features in the Israeli military video to satellite imagery of the location where the three girls died, 1 mile (1.7 kilometers) from the store.

An Israeli intelligence officer told the AP that AI has been used to help pinpoint all targets in the past three years. In this case, AI likely pinpointed a residence, and other intelligence gathering could have placed a person there. At some point, the car left the residence.

Humans in the target room would have decided to strike. The error could have happened at any point, he said: Previous faulty information could have flagged the wrong residence, or they could have hit the wrong vehicle.

The AP also saw a message from a second source with knowledge of that airstrike who confirmed it was a mistake, but didn’t elaborate.

A spokesperson for the Israeli military denied that AI systems were used during the airstrike itself, but refused to answer whether AI helped select the target or whether it was wrong. The military told the AP that officials examined the incident and expressed “sorrow for the outcome.”

How it works

Microsoft and the San Francisco-based startup OpenAI are among a legion of U.S. tech firms that have supported Israel’s wars in recent years.

Google and Amazon provide cloud computing and AI services to the Israeli military under “Project Nimbus,” a $1.2 billion contract signed in 2021 when Israel first tested out its in-house AI-powered targeting systems. The military has used Cisco and Dell server farms or data centers. Red Hat, an independent IBM subsidiary, also has provided cloud computing technologies to the Israeli military, and Palantir Technologies, a Microsoft partner in U.S. defense contracts, has a “strategic partnership” providing AI systems to help Israel’s war efforts.

Google said it is committed to responsibly developing and deploying AI “that protects people, promotes global growth, and supports national security.” Dell provided a statement saying the company commits to the highest standards in working with public and private organizations globally, including in Israel. Red Hat spokesperson Allison Showalter said the company is proud of its global customers, who comply with Red Hat's terms to adhere to applicable laws and regulations.

Palantir, Cisco and Oracle did not respond to requests for comment. Amazon declined to comment.

The Israeli military uses Microsoft Azure to compile information gathered through mass surveillance, including phone calls, texts and audio messages, which it transcribes and translates, according to an Israeli intelligence officer who works with the systems. That data can then be cross-checked with Israel’s in-house targeting systems and vice versa.

He said he relies on Azure to quickly search for terms and patterns within massive text troves, such as finding conversations between two people within a 50-page document. Azure also can find people giving directions to one another in the text, which can then be cross-referenced with the military’s own AI systems to pinpoint locations.

The Microsoft data the AP reviewed shows that since the Oct. 7 attack, the Israeli military has made heavy use of transcription and translation tools and OpenAI models, although the data does not detail which ones. Typically, AI models that transcribe and translate perform best in English. OpenAI has acknowledged that its popular AI-powered speech recognition model Whisper, which can transcribe speech in multiple languages, including Arabic, and translate it into English, can make up text that no one said, including adding racial commentary and violent rhetoric.

“Should we be basing these decisions on things that the model could be making up?” said Joshua Kroll, an assistant professor of computer science at the Naval Postgraduate School in Monterey, California, who spoke to the AP in his personal capacity, not reflecting the views of the U.S. government.

The Israeli military said any phone conversation translated from Arabic or intelligence used in identifying a target has to be reviewed by an Arabic-speaking officer.

Errors can still happen for many reasons involving AI, said Israeli military officers who have worked with the targeting systems and other tech experts. One intelligence officer said he had seen targeting mistakes that relied on incorrect machine translations from Arabic to Hebrew.

The Arabic word describing the grip on the launch tube for a rocket-propelled grenade is the same as the word for “payment.” In one instance the machine translated it wrong, and the person verifying the translation initially didn’t catch the error, he said, which could have added people speaking about payments to target lists. The officer was there by chance and caught the problem, he said.

Intercepted phone calls tied to a person’s profile also include the time the person called and the names and numbers of those on the call. But it takes an extra step to listen to and verify the original audio, or to see a translated transcript.

Sometimes the data attached to people’s profiles is wrong. For example, the system misidentified a list of high school students as potential militants, according to the officer. An Excel spreadsheet titled “finals” in Arabic, which was attached to several people’s profiles, contained the names of at least 1,000 students on an exam list in one area of Gaza, he said. This was the only piece of incriminating evidence attached to people’s files, he said, and had he not caught the mistake, those Palestinians could have been wrongly flagged.

He said he also worried that young officers, some still younger than 20, who are under pressure to find targets quickly with the help of AI, would jump to conclusions.

AI alone could lead to the wrong conclusion, said another soldier who worked with the targeting systems. For example, AI might flag a house owned by someone linked to Hamas who does not live there. Before the house is hit, humans must confirm who is actually in it, he said.

“Obviously there are things that I live peacefully with and things that I could have done better in some targeted attacks that I’m responsible for,” the soldier told the AP. “It’s war, things happen, mistakes happen, we are human.”

Tal Mimran served 10 years as a reserve legal officer for the Israeli military, and on three NATO working groups examining the use of new technologies, including AI, in warfare. Previously, he said, it took a team of up to 20 people a day or more to review and approve a single airstrike. Now, with AI systems, the military is approving hundreds a week.

Mimran said over-reliance on AI could harden people’s existing biases.

“Confirmation bias can prevent people from investigating on their own,” said Mimran, who teaches cyber law policy. “Some people might be lazy, but others might be afraid to go against the machine and be wrong and make a mistake.”
